Description

- MLOps and AIOps Overview

| Attribute | MLOps | AIOps |
| --- | --- | --- |
| Primary focus | Model lifecycle: build, test, deploy, monitor | IT operations: event correlation, anomaly detection, automation |
| Primary users | Data scientists, ML engineers, DevOps | SREs, IT ops, platform engineers |
| Core goals | Reproducibility, CI/CD for models, governance | Reduce MTTR, automate incident response, surface insights |
| Key components | Data versioning; model training; CI/CD; model registry; monitoring | Log/metric ingestion; feature extraction; ML-driven correlation; runbooks |
| Typical data | Labeled datasets, feature stores, model artifacts | Time-series metrics, logs, traces, topology data |
| Maturity/tools | Kubeflow, MLflow, TFX, Seldon | Splunk, Moogsoft, Dynatrace, Elastic APM |

- Definition (MLOps): MLOps (Machine Learning Operations) is the set of practices and tooling that connects data science with software engineering and DevOps to reliably build, deploy, and manage ML models in production.

- Definition (AIOps): AIOps (Artificial Intelligence for IT Operations) applies machine learning and big-data techniques to automate and enhance IT operations such as anomaly detection, event correlation, and automated remediation.

- High-level difference: MLOps focuses on the model lifecycle and reproducible ML pipelines, while AIOps focuses on operational observability and automating IT workflows using ML.

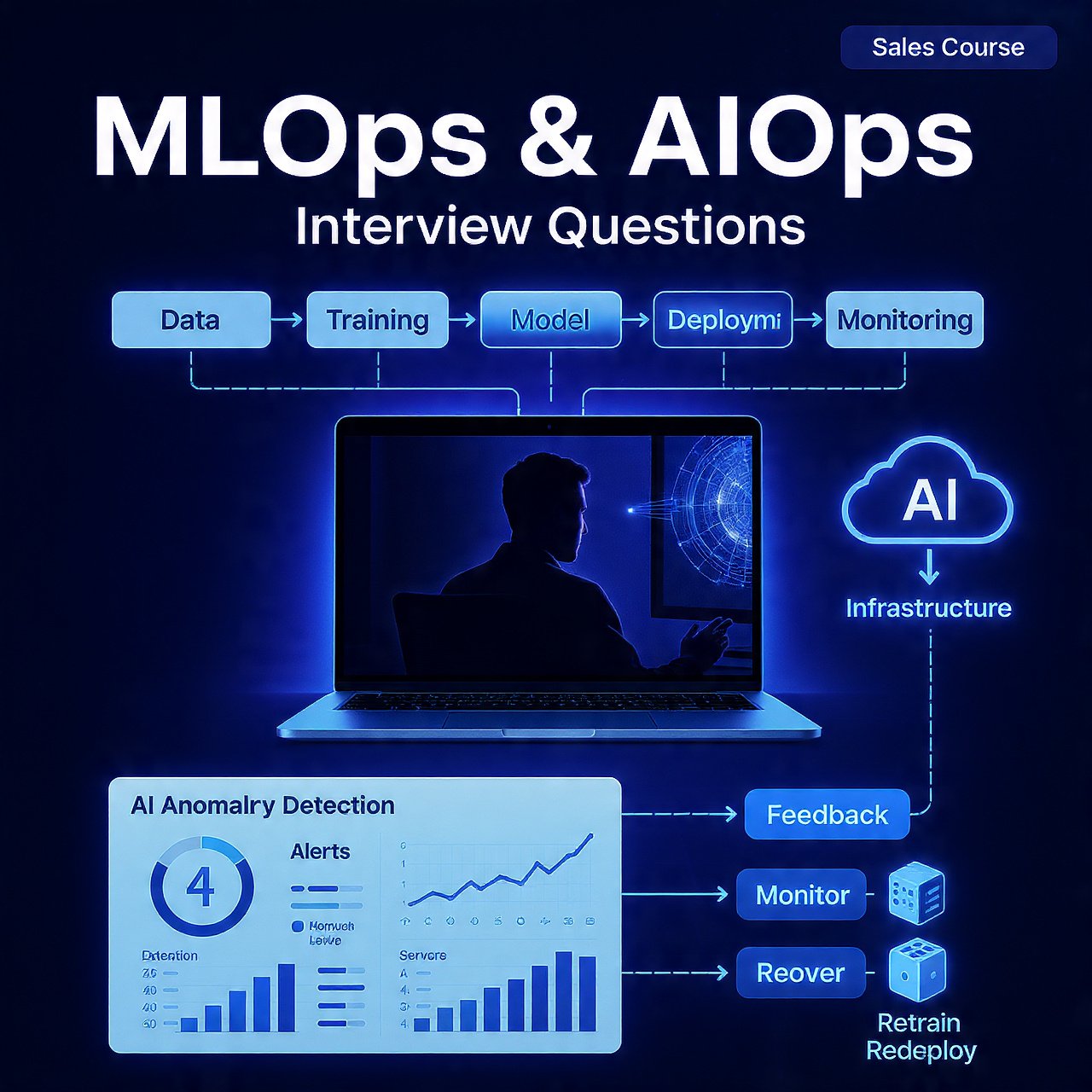

- Data management (MLOps): Core features include data versioning, lineage tracking, feature stores, and automated data validation to ensure training/serving parity.
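As a rough illustration of automated data validation, the sketch below checks each record against a declared schema before it reaches training or serving. The `FieldSpec` class, the `SCHEMA` contents, and the field names are hypothetical and chosen only for this example; a production setup would more likely use a dedicated validation library.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class FieldSpec:
    """Expected type and optional numeric bounds for one feature (hypothetical)."""
    dtype: type
    min_value: Optional[float] = None
    max_value: Optional[float] = None


# Hypothetical schema shared by the training and serving paths.
SCHEMA: Dict[str, FieldSpec] = {
    "age": FieldSpec(int, 0, 120),
    "income": FieldSpec(float, 0.0, None),
}


def validate_row(row: dict, schema: Dict[str, FieldSpec]) -> List[str]:
    """Return a list of human-readable violations; an empty list means the row is valid."""
    errors: List[str] = []
    for name, spec in schema.items():
        if name not in row:
            errors.append(f"{name}: missing")
            continue
        value = row[name]
        if not isinstance(value, spec.dtype):
            errors.append(
                f"{name}: expected {spec.dtype.__name__}, got {type(value).__name__}"
            )
            continue
        if spec.min_value is not None and value < spec.min_value:
            errors.append(f"{name}: {value} below minimum {spec.min_value}")
        if spec.max_value is not None and value > spec.max_value:
            errors.append(f"{name}: {value} above maximum {spec.max_value}")
    return errors
```

Running the same `validate_row` check in both the training pipeline and the serving path is one simple way to catch training/serving skew early.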

- Model lifecycle (MLOps): Advanced MLOps adds automated retraining, canary/blue-green model deployments, model registries, and drift detection to keep models accurate and compliant.
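A canary deployment sends a small, stable share of traffic to the new model version. The sketch below shows one possible way to do that split deterministically by hashing a request identifier; the function name `route_model` and the labels `"canary"` and `"stable"` are assumptions for illustration, not part of any particular serving framework.

```python
import hashlib


def route_model(request_id: str, canary_percent: int) -> str:
    """Pick a model version for a request.

    The request ID is hashed into one of 100 buckets, so the same ID always
    lands on the same version and roughly `canary_percent` percent of IDs
    are routed to the canary.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "stable"
```

Hash-based routing keeps a given user or request on the same model version across retries, which makes canary metrics easier to compare against the stable version.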

- CI/CD for ML: MLOps extends CI/CD with pipeline orchestration, reproducible environments (containers), and automated testing for data and models.
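Automated model testing in a pipeline often takes the form of a quality gate that blocks promotion when a candidate regresses against the current baseline. The following is a minimal sketch under that assumption; `quality_gate`, the metric names, and the `max_drop` tolerance are hypothetical.

```python
from typing import Dict, List


def quality_gate(
    candidate: Dict[str, float],
    baseline: Dict[str, float],
    max_drop: float = 0.01,
) -> List[str]:
    """Compare candidate metrics with the baseline (higher is assumed better).

    Returns a list of failure messages; an empty list means the candidate
    may be promoted.
    """
    failures: List[str] = []
    for name, base_value in baseline.items():
        cand_value = candidate.get(name)
        if cand_value is None:
            failures.append(f"{name}: missing from candidate metrics")
        elif cand_value < base_value - max_drop:
            failures.append(
                f"{name}: {cand_value:.3f} is more than {max_drop} below baseline {base_value:.3f}"
            )
    return failures
```

A CI job could call `quality_gate` after evaluation and fail the build whenever the returned list is non-empty.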

- Governance and compliance: MLOps implements model explainability, audit trails, access controls, and bias testing as production-grade requirements.

- Monitoring (MLOps): Production monitoring covers prediction quality, data drift, feature distribution shifts, latency, and resource usage with alerting and automated rollback triggers.
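The source does not name a specific drift metric. As one commonly used option, the sketch below computes the Population Stability Index (PSI) between a training-time sample and a production sample of a single numeric feature. The bin count and the `eps` floor are assumptions for this example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample (`expected`) and a live sample (`actual`).

    Bins are equal-width over the baseline range; live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of 0 means the binned distributions match. A frequently quoted rule of thumb treats values above roughly 0.2 as a significant shift, but the alerting threshold should be tuned per feature.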

- Data ingestion (AIOps): AIOps platforms ingest diverse telemetry—logs, metrics, traces, events, and topology maps—and normalize them for ML-driven analysis.

- Core AIOps features: Typical features include anomaly detection, root-cause analysis, event correlation, noise reduction, and automated remediation playbooks to reduce alert fatigue and MTTR.
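Anomaly detection on a metric stream can be sketched with a rolling z-score: each new point is compared with the mean and standard deviation of the preceding window. This is a deliberately simple stand-in for the ML-driven detectors that AIOps platforms use; the window size and threshold are assumptions.

```python
from collections import deque
from statistics import mean, pstdev
from typing import List, Sequence


def rolling_zscore_anomalies(
    values: Sequence[float],
    window: int = 5,
    threshold: float = 3.0,
) -> List[int]:
    """Return indices of points that deviate strongly from the preceding window."""
    history: deque = deque(maxlen=window)
    anomalies: List[int] = []
    for i, v in enumerate(values):
        # Only score a point once a full window of history is available.
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            if sigma > 0 and abs(v - mu) / sigma > threshold:
                anomalies.append(i)
        history.append(v)
    return anomalies
```

Note that a detected spike still enters the window and inflates the standard deviation for the next few points, so a more careful detector would exclude or down-weight flagged values.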

- Advanced AIOps capabilities: At scale AIOps adds causal inference, predictive capacity planning, automated change impact analysis, and closed-loop remediation integrated with orchestration tools.

- Integration patterns: Both disciplines require tight integration with CI/CD, observability stacks, orchestration platforms, and service catalogs to be effective in production.

- Automation and feedback loops: Mature implementations use closed-loop automation—models trigger actions and telemetry feeds back to retrain or refine rules and models.
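The closed-loop idea can be sketched as a small controller: an anomaly score triggers a remediation action, and later feedback about whether the incident was real adjusts the trigger threshold. The class name, the score range of 0 to 1, and the fixed adjustment step are all assumptions for illustration; real systems typically retrain a model rather than nudge a single threshold.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class ClosedLoopController:
    threshold: float = 0.8   # anomaly score at or above which remediation fires
    adjust: float = 0.05     # step used to tune the threshold from feedback
    history: List[Tuple[float, bool]] = field(default_factory=list)

    def observe(self, score: float, remediate: Callable[[], None]) -> bool:
        """Run the remediation action if the score crosses the threshold."""
        if score >= self.threshold:
            remediate()
            return True
        return False

    def feedback(self, score: float, was_real_incident: bool) -> None:
        """Record the outcome and tune the threshold accordingly."""
        self.history.append((score, was_real_incident))
        fired = score >= self.threshold
        if fired and not was_real_incident:
            # False positive: become less sensitive.
            self.threshold = min(1.0, self.threshold + self.adjust)
        elif not fired and was_real_incident:
            # Missed incident: become more sensitive.
            self.threshold = max(0.0, self.threshold - self.adjust)
```

The `history` list is where telemetry feeds back into the loop; in practice it would be the labeled data used to retrain or re-tune the detector.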

- Scalability concerns: Production MLOps must handle large datasets, distributed training, and model serving at scale; AIOps must process high-volume streaming telemetry with low-latency inference.

- Tooling ecosystem: MLOps tooling emphasizes experiment tracking, model registries, and serving frameworks; AIOps tooling emphasizes ingestion pipelines, correlation engines, and runbook automation.

- Organizational impact: MLOps requires cross-functional collaboration between data science, engineering, and compliance; AIOps requires alignment between SRE, platform, and application teams to act on insights.

- Success metrics: MLOps success is measured by model accuracy in production, deployment frequency, and time-to-recovery for model issues; AIOps success is measured by reduced alert noise, faster incident resolution, and fewer manual interventions.
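Two of the metrics above are easy to compute once incident and alert data are collected. The sketch below assumes incidents are available as `(opened, resolved)` timestamp pairs and that alerts can be counted before and after correlation; both function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Sequence, Tuple


def mean_time_to_recovery(
    incidents: Sequence[Tuple[datetime, datetime]],
) -> timedelta:
    """Average duration between an incident being opened and resolved."""
    durations = [resolved - opened for opened, resolved in incidents]
    return sum(durations, timedelta()) / len(durations)


def alert_noise_reduction(raw_alerts: int, actionable_alerts: int) -> float:
    """Fraction of raw alerts suppressed or merged before reaching a human."""
    return 1 - actionable_alerts / raw_alerts
```

Tracking both values over time, rather than as one-off numbers, shows whether an AIOps rollout is actually shortening incidents and reducing manual triage.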

- Adoption advice: Start small with reproducible pipelines and telemetry collection, instrument for observability, add automated tests and monitoring, then iterate toward automated retraining (MLOps) or closed-loop remediation (AIOps).